Data is a driving force for companies looking to make informed decisions. But collecting and interpreting that data can be a huge hurdle for many.

Collecting data is not always as simple as buying data sets. Teams need efficient ways to interpret the data, and they need to make sure the data they actually receive is accurate and reliable.

With big data and analytics a huge market in 2023 (over $270 billion, according to some estimates), experts who specialize in interpreting and presenting data remain crucial for companies that rely on data to make decisions.

One such company is Bigeye, founded in 2019. I had the chance to speak with Kyle Kirwan, Bigeye's co-founder and CEO, to learn more about what the company does, how it does it, and why high-quality data matters.

You can read the full interview below:

Care to introduce yourself and your role at Bigeye?

I'm the co-founder and CEO of Bigeye. Before starting the company, I led a team at Uber that solved similar problems for Uber's data teams. Earlier in my career, I was one of Uber's first analysts.

There, I launched Databook, the company's data catalog, along with other tooling used by thousands of Uber's internal data users. Bigeye itself is a Sequoia-backed startup focused on data observability.

You can reach me on Twitter or LinkedIn.

In just a couple of sentences, what does Bigeye set out to accomplish?

We're here to help data teams ensure their stakeholders have access to high-quality data running on reliable data pipelines, without wasting time on tedious manual work.

Our data observability platform gives data engineers and scientists constant visibility into the layout of their data pipelines, the performance of each pipeline, and the quality of the data moving through them.

This enables them to detect, understand, and resolve problems in their pipelines before those problems reach analytics and machine learning applications.

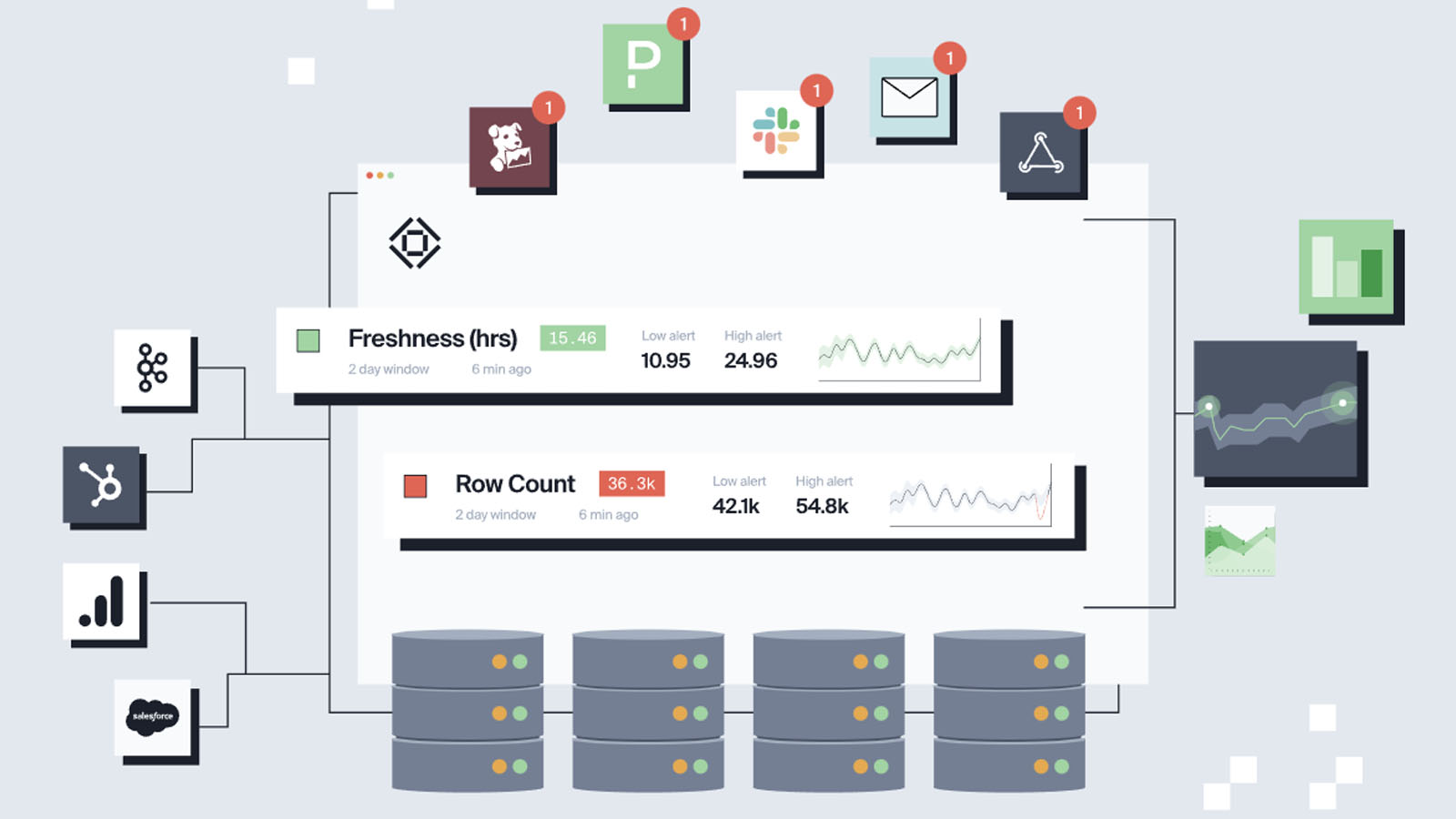

Image: Bigeye

What inspired the creation of the company?

I was on the receiving end of plenty of data quality issues when I was a data scientist, and my co-founder, Egor Gryaznov, had to fix plenty of data pipeline failures as a data engineer. That's where our empathy for the problem comes from.

The company itself grew out of conversations with data scientists and analysts who had left Uber and missed the data testing, observability, and incident management tools we had there.

We realized how important those tools had become, and that they didn't exist outside of Uber, our peers at Airbnb, and a handful of other companies.

I started at Uber as a data scientist in 2013 and watched many other data scientists, engineers, and analysts struggle as the company's data grew from roughly 100 data sets to more than 100,000.

It became difficult to know which data was accurate, reliable, and up to date, and analytics dashboards and machine learning models often broke because of problems in the data feeding them.

I became a product manager for our Data Platform organization, reporting to the head of data, and led a team that built internal tools for dealing with those challenges at scale. About 3,000 people across the company used our tools each week to find data, check whether it was high quality, and make sure our analytics and machine learning were working properly.

Bigeye lets me continue that work in a way that serves the broader market, not just Uber.

This is probably a loaded question, but just how important is active data monitoring?

It's important, but it's not the whole story! Monitoring is a key part of observability, but it's like the meat in the middle of the sandwich. Instrumentation and alerting are the two slices of bread.

Without instrumentation, you have no signals from your system to monitor, and without alerting to draw your attention to issues in the data pipeline, you have to remember to check your monitoring every day, which gets tiring.

The whole data observability framework is crucial for any business that relies on data (and that's most businesses). Let's put it this way: if you DON'T actively monitor your data, here's what can go wrong.

- Wasted team time
- A ballooning, labyrinthine data infrastructure that's impossible to navigate
- Eroded trust in the data
- Reduced operational efficiency
- Redundant efforts
- Bad business decisions
- Embarrassment in front of customers or adjacent teams
- Millions in lost revenue in extreme cases

Besides data monitoring, does Bigeye offer customers any other services?

Yes! Bigeye also automates instrumentation: we automatically track metadata such as freshness and row count for every table in a customer's data warehouse.
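As a rough illustration of what table-level instrumentation involves, the Python sketch below computes two of the signals mentioned here, row count and freshness, for a single table. It uses an in-memory SQLite database as a stand-in for a warehouse; the `orders` table, its `updated_at` column, and the `collect_table_metadata` helper are hypothetical, and this is not how Bigeye's product is implemented.

```python
import sqlite3
from datetime import datetime, timezone


def collect_table_metadata(conn, table, ts_column, now=None):
    """Return row count and freshness (seconds since the newest row) for one table.

    Assumes `ts_column` holds ISO-8601 timestamps with a UTC offset, all in the
    same format, so that SQL MAX() on the text values finds the latest one.
    """
    now = now or datetime.now(timezone.utc)
    # Identifiers are quoted; they are assumed to come from the warehouse catalog, not user input.
    row_count = conn.execute(f'SELECT COUNT(*) FROM "{table}"').fetchone()[0]
    latest = conn.execute(f'SELECT MAX("{ts_column}") FROM "{table}"').fetchone()[0]
    freshness = None
    if latest is not None:
        freshness = (now - datetime.fromisoformat(latest)).total_seconds()
    return {"table": table, "row_count": row_count, "freshness_seconds": freshness}


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE orders (id INTEGER, updated_at TEXT)")
    conn.executemany(
        "INSERT INTO orders VALUES (?, ?)",
        [(1, "2023-05-01T10:00:00+00:00"), (2, "2023-05-01T11:00:00+00:00")],
    )
    snapshot_time = datetime(2023, 5, 1, 12, 0, tzinfo=timezone.utc)
    print(collect_table_metadata(conn, "orders", "updated_at", now=snapshot_time))
    # {'table': 'orders', 'row_count': 2, 'freshness_seconds': 3600.0}
```

Running a function like this on a schedule for every table produces the time series that monitoring and alerting then work from.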

We also automate alerting: we developed an in-house anomaly detection system that, in real-world testing, outperforms simpler approaches such as ARIMA or Meta's (formerly Facebook's) Prophet library.
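Bigeye's in-house model isn't described in the interview, so the sketch below only shows the general idea of anomaly detection on a monitored metric, using a deliberately simple baseline: a trailing-window z-score, which is simpler still than ARIMA or Prophet. The function name, window size, and threshold are illustrative assumptions.

```python
from statistics import mean, stdev


def detect_anomalies(values, window=7, threshold=3.0):
    """Return indices whose value is more than `threshold` sample standard
    deviations away from the mean of the preceding `window` values."""
    if window < 2:
        raise ValueError("window must be at least 2 to estimate a standard deviation")
    anomalies = []
    for i in range(window, len(values)):
        history = values[i - window:i]
        mu, sigma = mean(history), stdev(history)
        if sigma == 0:
            # A perfectly flat history: treat any change as anomalous.
            if values[i] != mu:
                anomalies.append(i)
        elif abs(values[i] - mu) / sigma > threshold:
            anomalies.append(i)
    return anomalies


if __name__ == "__main__":
    daily_row_counts = [1000, 1010, 990, 1005, 995, 1002, 998, 1001, 120, 1003]
    print(detect_anomalies(daily_row_counts))  # [8]
```

This baseline has obvious gaps: the anomalous value stays in the trailing window and inflates the standard deviation for the next few points, and it ignores trend and seasonality. Those are the kinds of weaknesses more sophisticated models aim to address.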

Lastly, we assist with root cause analysis for the anomalies we detect: we automatically track data lineage and generate debugging queries that highlight the specific rows of data affected by an anomaly.
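Root cause analysis as described here combines two ideas: walking the lineage graph upstream from the table where an anomaly fired, and generating queries that surface the affected rows. The sketch below shows both in miniature. The lineage dictionary, the table and column names, and both helper functions are hypothetical, and the null-value query is just one example of a debugging query.

```python
from collections import deque

# Hypothetical lineage: each table maps to the tables it is built from.
LINEAGE = {
    "revenue_dashboard": ["orders_daily"],
    "orders_daily": ["orders_raw", "currency_rates"],
    "orders_raw": [],
    "currency_rates": [],
}


def upstream_tables(table, lineage):
    """Breadth-first walk of the lineage graph, nearest dependencies first."""
    seen, order = {table}, []
    queue = deque([table])
    while queue:
        current = queue.popleft()
        for parent in lineage.get(current, []):
            if parent not in seen:  # the seen-set also guards against cycles
                seen.add(parent)
                order.append(parent)
                queue.append(parent)
    return order


def null_debug_query(table, column, limit=100):
    """Build a query listing sample rows where `column` is NULL (hypothetical helper)."""
    return f'SELECT * FROM "{table}" WHERE "{column}" IS NULL LIMIT {int(limit)}'


if __name__ == "__main__":
    # An anomaly fired on revenue_dashboard: which tables could be the root cause?
    print(upstream_tables("revenue_dashboard", LINEAGE))
    # ['orders_daily', 'orders_raw', 'currency_rates']
    print(null_debug_query("orders_raw", "amount", limit=20))
    # SELECT * FROM "orders_raw" WHERE "amount" IS NULL LIMIT 20
```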

Walk me through the process – at a very basic level, how does Bigeye monitor data for anomalies and alert customers of issues?

First, we connect to customer data sources the same way an analytics tool such as Tableau or a transformation tool such as dbt would.

Next, we index all the data inside those sources so it can be explored by the customer, similar to a data catalog.

Then we start automatically gathering table-level metadata about freshness, row count, and data lineage.

Then we use data profiling and our in-house recommendation algorithm to suggest more detailed column-level instrumentation (like missing values, outliers, and correct data formats) that we think users should apply.
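The column-level checks mentioned above (missing values, outliers, data formats) can be illustrated with a small profiler plus a rule that suggests checks. This is a minimal sketch under stated assumptions: the IQR outlier rule, the regex-based format check, and the `suggest_checks` thresholds are stand-ins chosen for illustration, not Bigeye's recommendation algorithm.

```python
import re
from statistics import quantiles


def profile_column(values, pattern=None):
    """Compute simple column-level signals: null rate, format-mismatch rate,
    and a count of IQR outliers for numeric values."""
    total = len(values)
    non_null = [v for v in values if v is not None]
    profile = {"null_rate": (total - len(non_null)) / total if total else 0.0}
    if pattern is not None:
        regex = re.compile(pattern)
        mismatches = sum(1 for v in non_null if not regex.fullmatch(str(v)))
        profile["format_mismatch_rate"] = mismatches / len(non_null) if non_null else 0.0
    numeric = [v for v in non_null if isinstance(v, (int, float))]
    if len(numeric) >= 4:
        q1, _, q3 = quantiles(numeric, n=4)
        fence = 1.5 * (q3 - q1)
        profile["outliers"] = sum(1 for v in numeric if v < q1 - fence or v > q3 + fence)
    return profile


def suggest_checks(column, profile, null_ceiling=0.01, mismatch_ceiling=0.05):
    """Hypothetical recommendation rules: suggest checks the column passes today,
    so that a future regression would stand out."""
    suggestions = []
    if profile["null_rate"] <= null_ceiling:
        suggestions.append(f"{column}: alert if null rate exceeds {null_ceiling:.0%}")
    if profile.get("format_mismatch_rate", 1.0) <= mismatch_ceiling:
        suggestions.append(f"{column}: alert on format mismatches")
    if profile.get("outliers") == 0:
        suggestions.append(f"{column}: alert on IQR outliers")
    return suggestions


if __name__ == "__main__":
    amounts = [10, 12, 11, None, 13, 12, 500, 11]
    print(profile_column(amounts))  # {'null_rate': 0.125, 'outliers': 1}
    emails = ["a@x.com", "b@y.org", "c@z.net"]
    email_profile = profile_column(emails, pattern=r"[^@\s]+@[^@\s]+\.\w+")
    print(suggest_checks("email", email_profile))
```

The design choice here is to recommend checks a column currently passes, on the reasoning that a sudden failure of a previously healthy check is a meaningful signal.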

Once all those signals about their tables and columns are being tracked, we train an anomaly detection model for each attribute (this takes minutes), which can alert users to issues with that attribute.

Users can receive those alerts via email, Slack, Microsoft Teams, and other channels. How they respond to each alert feeds back into the models, so the more users respond, the more accurate the models become over time.
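The interview doesn't say how user feedback reaches Bigeye's models. One simple way such a loop could work, shown here purely as a hypothetical, is to nudge an alert threshold: dismissed alerts raise it (fewer false alarms), confirmed ones lower it (more sensitivity), within fixed bounds. The class name, step size, and bounds are all assumptions.

```python
from dataclasses import dataclass


@dataclass
class AdaptiveThreshold:
    """Hypothetical feedback rule for one monitored attribute's z-score threshold."""

    threshold: float = 3.0
    step: float = 0.25
    minimum: float = 2.0
    maximum: float = 6.0

    def should_alert(self, z_score: float) -> bool:
        return abs(z_score) > self.threshold

    def record_feedback(self, was_real_issue: bool) -> None:
        if was_real_issue:
            # Confirmed issue: become slightly more sensitive.
            self.threshold = max(self.minimum, self.threshold - self.step)
        else:
            # Dismissed alert: become slightly less sensitive.
            self.threshold = min(self.maximum, self.threshold + self.step)


if __name__ == "__main__":
    rule = AdaptiveThreshold()
    print(rule.should_alert(3.2))  # True
    rule.record_feedback(False)
    rule.record_feedback(False)
    print(rule.threshold, rule.should_alert(3.2))  # 3.5 False
```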

Is your service intended for enterprise-level businesses or can SMBs and individuals benefit from Bigeye’s data monitoring?

We typically work with organizations that have 1,000 or more employees and 50 or more people working across data engineering, data science, and analytics.

But sometimes we work with smaller customers, in industries like finance. Even a small organization might have a lot riding on its data, for example when it runs quantitative trading strategies.

Where do you see the future of big data heading over the next 10 years?

Now that infrastructure is easier to spin up initially and scale up over time (thanks to companies like Snowflake, Databricks, Confluent, Astronomer, and dbt Labs), I think the emphasis is increasingly on data operations.

This story played out inside Uber at a highly accelerated pace. Once we had all this great scalable data infrastructure that was open-access within the company, it became a challenge to manage it all.

That’s where tools like Databook (our data catalog), Trust (our data testing system), and DQM (our data observability system) came from.

Companies are less and less excited about HAVING data, and now the focus is on USING data.

Anything you would like to add before we wrap up the interview?

I love that data has become more woven into society and the general conversation. I hope that leads us to collectively demand more from organizations when it comes to how responsibly they treat the data we all generate.